How to Leverage Amazon’s New OpenAI Products on AWS: A Practical Playbook for Small Businesses and Law Firms

For small businesses and boutique law firms, AI is shifting from “interesting” to “operational.” The game-changer: Amazon and OpenAI have expanded their partnership so you can now access OpenAI’s latest models directly inside AWS, with the same identity, security, and compliance controls your team already trusts. That means faster pilots, simpler procurement, and lower risk—without rebuilding your stack. In this guide, you’ll learn what Amazon’s new OpenAI offerings on AWS include, why they matter now, and exactly how to implement them—step by step—to streamline client intake, automate document workflows, and unlock measurable ROI within 90 days. (openai.com)

- What’s new: OpenAI on AWS—what it actually includes

- Why this matters for small businesses and law firms

- High-impact use cases and quick-start playbooks

- Reference architectures that work on day one

- Implementation roadmap: 30/60/90 days

- Compare options: Bedrock vs. Direct OpenAI API vs. Azure OpenAI

- Conclusion and next steps

What’s new: OpenAI on AWS—what it actually includes

On April 28, 2026, OpenAI and Amazon Web Services announced that OpenAI’s models—including the newest GPT‑5.5 family—are available natively in Amazon Bedrock. In addition to text and multimodal models, the offering introduces OpenAI’s code-generation capabilities (Codex) and managed/agentic features that help automate multi-step tasks inside your AWS environment. These services are delivered with AWS-native controls like IAM policies, VPC networking, KMS encryption, CloudTrail logging, and Bedrock guardrails—removing friction for security and compliance reviews. (openai.com)

“Amazon will soon be making OpenAI’s models available directly on Amazon Bedrock.” — Andy Jassy, Amazon CEO, April 27, 2026. (apnews.com)

Why it matters: many small organizations already standardize on AWS for identity, networking, and data governance. Now you can adopt OpenAI’s capabilities without standing up new identity providers, reinventing secret management, or routing sensitive documents through third-party gateways. Axios reported the shift followed changes to OpenAI’s Microsoft relationship, opening the door for multi-cloud distribution and allowing AWS customers to use OpenAI where they already operate. (axios.com)

Why this matters for small businesses and law firms

For owners, partners, and operations leaders, the breakthrough isn’t just model performance—it’s operational fit. Running OpenAI models through Amazon Bedrock lets you apply the same AWS controls you already use to protect client files, health records, or financial documents. You gain:

- Stronger governance-by-default: Enforce IAM least-privilege, route traffic over PrivateLink, encrypt data with AWS KMS, and audit with CloudTrail—without bolt-on tooling. (openai.com)

- Simpler procurement and compliance: Use existing AWS contracts, Business Associate Addendums, and standard security reviews to accelerate approvals.

- Operational speed: Provision Bedrock in minutes; connect to S3 and enterprise search; then compose agents that safely call internal tools using Bedrock’s server-side tool use. (aws.amazon.com)

- Cost control and observability: Centralize metering and usage alerts through AWS billing, and log every interaction for defensibility.

Bottom line: You can move from a risky “shadow AI” pattern to a well-governed, production-grade pattern—without pausing momentum. TechCrunch and OpenAI both frame this as the starting line for a broader collaboration, not a one-off feature drop, so expect rapid iteration. (techcrunch.com)

High-impact use cases and quick-start playbooks

For law firms

- Client intake triage and conflicts check: Use a secure chat workflow where staff upload engagement emails and prior matters. The model summarizes client needs, flags potential conflicts (by comparing entities against your matters database), and drafts a tailored intake checklist. Pair it with Amazon Kendra for entity-aware search across your DMS, and route structured outputs to your practice management system via AWS Lambda.

- Document drafting with firm voice: A retrieval-augmented generation (RAG) assistant pulls from templates, prior filings, and style guides in S3 to draft motions, engagement letters, or discovery requests. Include citations to source passages and a “red flag” section for risky language.

- E‑discovery summarization and timelines: Ingest productions into S3, chunk with AWS Glue and Bedrock knowledge bases or Kendra, then generate chronologies, issue maps, and deposition questions with linked references.

- Time entry and billing QA: Transcribe calls/hearings, extract tasks + durations, map to UTBMS codes, and flag anomalies before invoices go out.
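
The conflicts-check step in the intake workflow above can start as a deterministic pre-filter that runs before any model call. The following is a minimal sketch, assuming matter records are available as plain dictionaries; the `matter_id` and `parties` keys and the `find_conflicts` helper are hypothetical, and the standard library's `difflib` stands in for whatever entity resolution your DMS provides.

```python
import re
from difflib import SequenceMatcher

# Corporate suffixes ignored when comparing names (illustrative, not exhaustive).
_SUFFIXES = {"inc", "llc", "llp", "ltd", "corp", "co", "company", "corporation"}


def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and drop common corporate suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(t for t in tokens if t not in _SUFFIXES)


def find_conflicts(new_parties, matters, threshold=0.85):
    """Return (party, matter_id, existing_party, score) for likely matches.

    `matters` is a list of dicts with hypothetical keys `matter_id` and `parties`.
    """
    hits = []
    for party in new_parties:
        for matter in matters:
            for existing in matter["parties"]:
                score = SequenceMatcher(
                    None, normalize(party), normalize(existing)
                ).ratio()
                if score >= threshold:
                    hits.append((party, matter["matter_id"], existing, round(score, 2)))
    return hits


# Made-up records for illustration only.
matters = [
    {"matter_id": "M-001", "parties": ["Acme Holdings, Inc.", "Jane Roe"]},
    {"matter_id": "M-002", "parties": ["Globex LLC"]},
]
flags = find_conflicts(["ACME Holdings", "Initech Corp."], matters)
```

Anything this filter flags should still go to a person for review. The model's job is to summarize why a match might matter, not to clear the conflict.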

For small businesses and professional services

- Customer support copilot: Draft and route responses based on product manuals, policies, and past tickets; escalate with structured reasoning steps logged to CloudWatch for QA.

- Marketing and sales enablement: Generate on-brand proposals and one-pagers from CRM opportunities; enforce tone/claims guardrails with Bedrock safety settings; push approved assets to your CMS.

- Operations automation: Read invoices, match POs, reconcile variances, and post to your accounting system via a server-side tools agent—no API keys in browsers. (aws.amazon.com)
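
The matching logic behind the operations automation bullet above is simple enough to keep in plain code, with the model used only to extract fields from the invoice. This is a rough sketch under that assumption; the `PurchaseOrder` and `Invoice` types, the `reconcile` helper, and the 2% tolerance are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PurchaseOrder:
    po_number: str
    vendor: str
    amount: Decimal


@dataclass(frozen=True)
class Invoice:
    invoice_id: str
    po_number: str
    vendor: str
    amount: Decimal


def reconcile(invoice, pos_by_number, tolerance=Decimal("0.02")):
    """Classify an invoice as matched, variance, or unmatched.

    `tolerance` is the allowed relative difference (2% here, an
    illustrative value, not a recommendation).
    """
    po = pos_by_number.get(invoice.po_number)
    if po is None or po.vendor != invoice.vendor:
        return {"invoice_id": invoice.invoice_id, "status": "unmatched", "variance": None}
    variance = invoice.amount - po.amount
    within = abs(variance) <= po.amount * tolerance
    return {
        "invoice_id": invoice.invoice_id,
        "status": "matched" if within else "variance",
        "variance": variance,
    }


# Made-up records for illustration only.
pos = {"PO-100": PurchaseOrder("PO-100", "Acme Supply", Decimal("1000.00"))}
ok = reconcile(Invoice("INV-1", "PO-100", "Acme Supply", Decimal("1010.00")), pos)
off = reconcile(Invoice("INV-2", "PO-100", "Acme Supply", Decimal("1100.00")), pos)
missing = reconcile(Invoice("INV-3", "PO-999", "Acme Supply", Decimal("50.00")), pos)
```

Using `Decimal` rather than floats avoids rounding surprises on currency amounts, which matters once results are posted to an accounting system.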

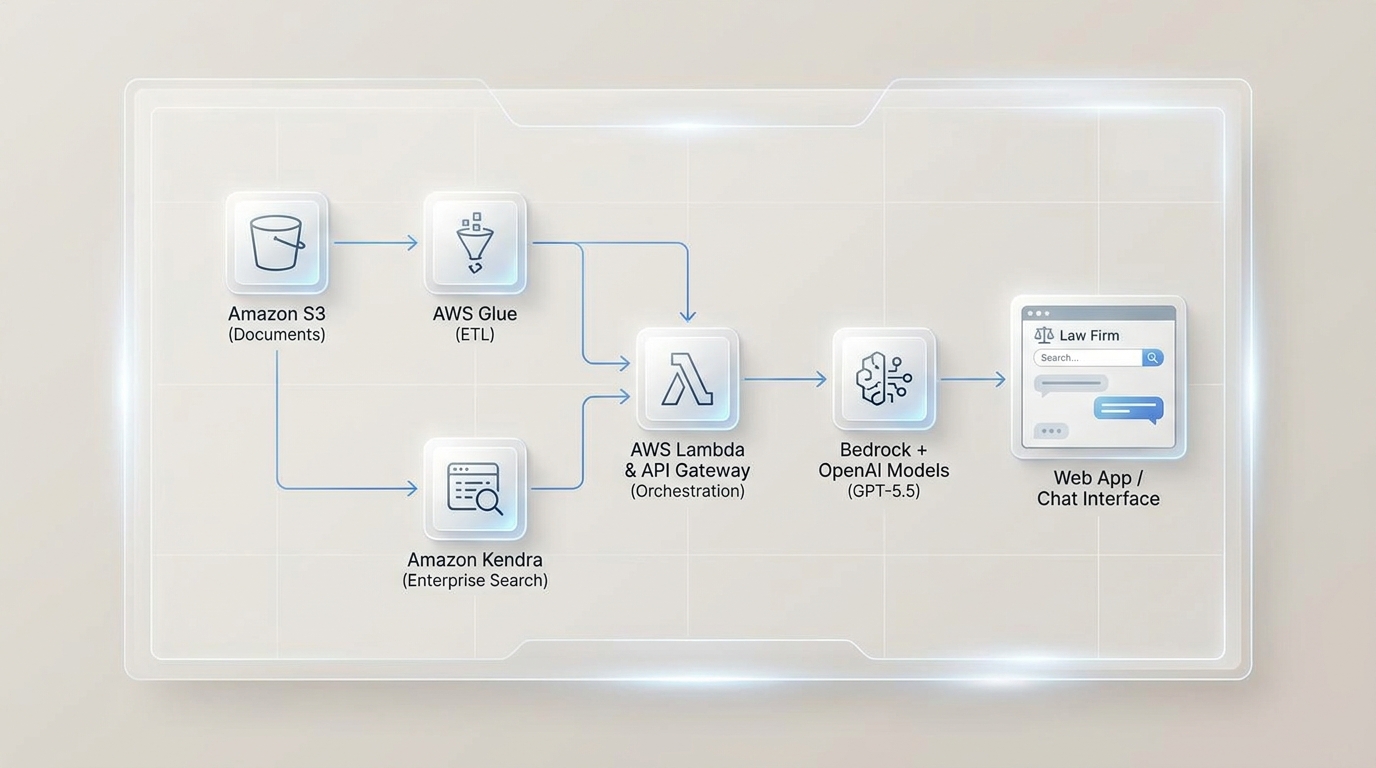

Reference architectures that work on day one

Below are low-friction patterns that fit common legal and small-business constraints.

1) RAG assistant for documents you already have

- Store documents (matters, contracts, SOPs) in versioned Amazon S3 buckets with KMS encryption and object-lock (immutability) where required.

- Index with Amazon Kendra or Bedrock knowledge bases; apply document-level ACLs to respect ethical walls.

- Orchestrate a server-side agent via Bedrock Responses API tool use to call search, database lookups, calendaring, and e-sign actions, all executed inside your AWS account. (aws.amazon.com)

- Present a web chat front end through API Gateway and Lambda, or integrate straight into your DMS/PMS.

- Govern via IAM; route over VPC endpoints/PrivateLink; log to CloudTrail; apply Bedrock guardrails for safety, PII masking, and content boundaries. (openai.com)
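
To make the ACL and citation steps above concrete, here is a minimal sketch of the retrieval side of a RAG assistant. It assumes chunks are already extracted and tagged with the groups allowed to read them; the `Chunk` type, the `retrieve` and `build_prompt` helpers, and the naive keyword scoring are hypothetical stand-ins for what Kendra or a Bedrock knowledge base would do.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read the source document


def retrieve(question, chunks, user_groups, top_k=3):
    """Rank ACL-permitted chunks by naive keyword overlap with the question."""
    terms = set(question.lower().split())
    # Filter by ACL first so walled-off content can never reach the prompt.
    visible = [c for c in chunks if c.allowed_groups & set(user_groups)]
    scored = [(len(terms & set(c.text.lower().split())), c) for c in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [c for _, c in ranked[:top_k]]


def build_prompt(question, hits):
    """Number each passage so the model can cite sources as [1], [2], ..."""
    sources = "\n".join(f"[{i}] ({c.doc_id}) {c.text}" for i, c in enumerate(hits, 1))
    return (
        "Answer using only the numbered sources and cite them like [1].\n"
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


# Made-up documents for illustration only.
chunks = [
    Chunk("engagement-template.docx", "standard engagement letter fee terms",
          frozenset({"litigation"})),
    Chunk("matter-42-memo.docx", "confidential fee dispute memo",
          frozenset({"matter-42-team"})),
]
question = "What are our engagement letter fee terms?"
hits = retrieve(question, chunks, ["litigation"])
prompt = build_prompt(question, hits)
```

The key design choice is that the ethical-wall filter runs before ranking, so a user outside the matter team never sees the restricted memo even if it scores well.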

2) Code & IT automation with OpenAI Codex

Use Codex to generate internal scripts, transform spreadsheets, or build small, domain-specific apps (e.g., client intake forms with validation). Keep execution in Lambda or in containers inside your VPC, and enforce review gates (pull requests, automated tests) before production deployment. (techcrunch.com)

3) Managed agents for multi-step business tasks

Define skills like “draft-engagement-letter,” “update-CRM,” or “create-invoice.” Provide tool schemas that map to Lambda or Step Functions. The agent plans, executes, and verifies steps, with secure, auditable calls. This is ideal for paralegal/ops tasks that blend retrieval, drafting, and system updates. (openai.com)
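
One way to picture the skill and tool-schema idea is a small registry that validates each model-proposed call before running it and writes an audit entry either way. This is only a sketch: the `tool` decorator, `dispatch` function, and schema layout are hypothetical and do not reflect the actual Bedrock or OpenAI agent interfaces, and the handler body is a placeholder for a Lambda or Step Functions invocation.

```python
import json

TOOLS = {}  # skill name -> (schema, handler)


def tool(name, description, required):
    """Register a handler with a JSON-Schema-style parameter description."""
    def decorator(fn):
        schema = {
            "name": name,
            "description": description,
            "parameters": {
                "type": "object",
                "properties": {field: {"type": "string"} for field in required},
                "required": list(required),
            },
        }
        TOOLS[name] = (schema, fn)
        return fn
    return decorator


@tool("draft-engagement-letter", "Draft an engagement letter for a client.",
      ["client_name", "matter_type"])
def draft_engagement_letter(client_name, matter_type):
    # Placeholder: a real handler might invoke Lambda or Step Functions here.
    return {"status": "drafted", "title": f"Engagement letter: {client_name} ({matter_type})"}


def dispatch(tool_call, audit_log):
    """Validate a model-proposed tool call, run it, and record an audit entry."""
    name, args = tool_call["name"], tool_call.get("arguments", {})
    if name not in TOOLS:
        result = {"status": "rejected", "reason": f"unknown tool {name!r}"}
    else:
        schema, handler = TOOLS[name]
        missing = [f for f in schema["parameters"]["required"] if f not in args]
        extra = [f for f in args if f not in schema["parameters"]["properties"]]
        if missing or extra:
            result = {"status": "rejected", "reason": f"missing={missing} extra={extra}"}
        else:
            result = handler(**args)
    audit_log.append(json.dumps({"tool": name, "arguments": args, "result": result}))
    return result
```

Rejecting unknown tools and unexpected arguments on the server side means the agent can only take actions you have explicitly registered, and every attempt leaves a log line.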

Implementation roadmap: 30/60/90 days

First 30 days: establish a compliant foundation

- Decide regions and data boundaries: Confirm where Bedrock + OpenAI models are available and align with client/regulatory requirements. Add PrivateLink, VPC endpoints, and KMS keys per data-classification policy. (openai.com)

- Set up accounts and roles: Create a dedicated Bedrock execution role with scoped permissions; separate dev/test/prod.

- Stand up observability: Enable CloudTrail and CloudWatch metrics/alarms for API usage, errors, and cost anomalies.

- Define data retention: Set S3 lifecycle policies; configure immutability for evidentiary records as needed.

- Draft a Responsible AI policy: Clarify approved use cases, forbidden inputs (e.g., client secrets), and human-in-the-loop review rules.
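
For the observability item above, a usage alarm can be defined as data and reviewed before it is applied. The sketch below builds keyword arguments for CloudWatch's `put_metric_alarm`. It assumes Bedrock publishes an `InputTokenCount` metric with a `ModelId` dimension in the `AWS/Bedrock` namespace, which you should confirm in your account; the `bedrock_token_alarm` helper and the model ID and SNS topic passed to it are hypothetical.

```python
def bedrock_token_alarm(model_id, hourly_token_limit, sns_topic_arn):
    """Build keyword arguments for CloudWatch's put_metric_alarm call."""
    return {
        "AlarmName": f"bedrock-input-tokens-{model_id}",
        "Namespace": "AWS/Bedrock",        # assumed namespace; verify in CloudWatch
        "MetricName": "InputTokenCount",   # assumed metric name; verify in CloudWatch
        "Dimensions": [{"Name": "ModelId", "Value": model_id}],
        "Statistic": "Sum",
        "Period": 3600,                    # evaluate one-hour totals
        "EvaluationPeriods": 1,
        "Threshold": float(hourly_token_limit),
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",  # no traffic should not raise the alarm
        "AlarmActions": [sns_topic_arn],
    }


# Placeholder identifiers, not real resources.
alarm = bedrock_token_alarm(
    "example.model-id",
    500_000,
    "arn:aws:sns:us-east-1:123456789012:ai-usage-alerts",
)
# To apply it (requires AWS credentials):
#   import boto3
#   boto3.client("cloudwatch").put_metric_alarm(**alarm)
```

Keeping the alarm definition in a function makes it easy to create one per model and per environment with different limits.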

Days 31–60: ship two production-grade pilots

- Pilot A—RAG knowledge assistant: Index 5–10k core documents (templates, memos, policies). Implement source citation, tone control, and red-team prompts to test guardrails.

- Pilot B—Operations automation agent: Build a server-side agent that extracts invoice data, maps to your GL, and posts entries via a Lambda tool. Start in “suggest-only” mode before auto-posting. (aws.amazon.com)

- Measure impact: Track draft time saved, review defects, and cycle time from request to deliverable.
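
The impact measures listed above are easier to defend if they are computed the same way every week. Here is a small sketch of that calculation, assuming you log one record per completed task; the `pilot_metrics` helper, the record keys, and the sample numbers are all hypothetical and exist only to show the arithmetic.

```python
from statistics import mean


def pilot_metrics(tasks, baseline_minutes):
    """Summarize pilot impact from per-task records.

    Each task dict uses hypothetical keys: draft_minutes, defects, cycle_hours.
    `baseline_minutes` is the average drafting time before the pilot.
    """
    n = len(tasks)
    avg_draft = mean(t["draft_minutes"] for t in tasks)
    saved = baseline_minutes - avg_draft
    return {
        "tasks": n,
        "avg_minutes_saved": saved,
        "pct_time_saved": round(100 * saved / baseline_minutes, 1),
        "defects_per_task": round(sum(t["defects"] for t in tasks) / n, 2),
        "avg_cycle_hours": mean(t["cycle_hours"] for t in tasks),
    }


# Made-up numbers for illustration, not measured results.
sample = [
    {"draft_minutes": 30, "defects": 1, "cycle_hours": 4},
    {"draft_minutes": 20, "defects": 0, "cycle_hours": 2},
    {"draft_minutes": 40, "defects": 2, "cycle_hours": 6},
]
summary = pilot_metrics(sample, baseline_minutes=60)
```

Capture the baseline before the pilot starts. Without it, the time-saved figure has nothing to compare against.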

Days 61–90: scale and harden

- Expand coverage: Add additional practice areas or business functions. Graduate pilots into a single “AI Desk” with request routing.

- Fine-tuning or preference optimization: Where generic prompts stall, run reinforcement fine-tuning (RFT) on Bedrock using OpenAI‑compatible APIs. Attach a Lambda-based reward function to nudge style and accuracy. (aws.amazon.com)

- Integrate QA: Establish automated prompt regression tests; add hallucination checks and PII scanners before responses reach end users.
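
The QA step above can begin with two inexpensive checks: a regex-based PII scan and a prompt regression suite that runs whenever a prompt or model version changes. The following is a minimal sketch; the patterns are deliberately simple and will miss many PII formats, and `scan_pii`, `run_regression`, and the case format are hypothetical. The `generate` argument is any function that takes a prompt and returns model text.

```python
import re

# Simple US-centric patterns for illustration; real scanners need broader coverage.
PII_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scan_pii(text):
    """Return the sorted PII categories detected in `text`."""
    return sorted(k for k, p in PII_PATTERNS.items() if p.search(text))


def run_regression(cases, generate):
    """Run each prompt through `generate` and collect failures.

    A case passes when every `must_include` phrase appears in the output
    and the output contains no detected PII.
    """
    failures = []
    for case in cases:
        output = generate(case["prompt"])
        missing = [p for p in case["must_include"] if p.lower() not in output.lower()]
        pii = scan_pii(output)
        if missing or pii:
            failures.append({"id": case["id"], "missing": missing, "pii": pii})
    return failures


cases = [{"id": "fee-terms", "prompt": "Summarize our fee terms.", "must_include": ["[1]"]}]
```

In practice `generate` would wrap your Bedrock call, and the suite would run in CI so a prompt edit that drops citations or leaks an email address fails before it reaches users.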

Legal Ops AI Readiness Checklist (print and keep)

- Data governance: Classify data; define retention and deletion; confirm redaction policy for PII and privileged content.

- Access controls: Map roles to IAM; enforce MFA; restrict model access to least privilege.

- Network posture: Require VPC endpoints/PrivateLink for model traffic; disallow public egress where feasible.

- Auditability: Turn on CloudTrail; store logs immutably; test incident response for misrouted prompts.

- Quality gates: Human-in-the-loop review for all client-facing drafts until KPIs prove stability.

- Change management: Create a prompt/version registry; train staff; appoint an AI steward (partner/ops lead).
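
The access-control and network-posture items in the checklist above can be expressed in a single IAM policy. This is a sketch, not a vetted policy: it allows invoking one model and denies all Bedrock actions that do not arrive through a named VPC endpoint. The `bedrock_invoke_policy` helper and the model ID and endpoint ID passed to it are hypothetical placeholders, and the foundation-model ARN format may differ if you use inference profiles.

```python
import json


def bedrock_invoke_policy(region, model_id, vpc_endpoint_id):
    """Build an IAM policy: invoke one model, and only through one VPC endpoint."""
    model_arn = f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedModelOnly",
                "Effect": "Allow",
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                "Resource": model_arn,
            },
            {
                # Explicit deny wins over any allow attached elsewhere.
                "Sid": "DenyOutsideVpcEndpoint",
                "Effect": "Deny",
                "Action": "bedrock:*",
                "Resource": "*",
                "Condition": {"StringNotEquals": {"aws:SourceVpce": vpc_endpoint_id}},
            },
        ],
    }


# Placeholder identifiers, not real resources.
policy = bedrock_invoke_policy("us-east-1", "example.model-id", "vpce-0123456789abcdef0")
policy_json = json.dumps(policy, indent=2)
```

Note that the deny statement also blocks Bedrock console access from outside the endpoint for any role it is attached to, so attach it to workload roles rather than administrator roles.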

Getting started in 60 minutes (hands-on)

- In AWS, enable Amazon Bedrock in your target region and request access to OpenAI models; verify quota. (openai.com)

- Create a KMS key and an S3 bucket (versioned, encrypted).

- Set up an IAM role for Bedrock with S3 read and KMS decrypt permissions.

- Load 50–100 reference documents (templates, FAQs) into S3 and index with Kendra or a Bedrock knowledge base.

- Spin up an API using API Gateway + Lambda that accepts a question, retrieves context, and calls the OpenAI model in Bedrock.

- Enable CloudTrail and CloudWatch for logging, add usage alarms, and test with red-team prompts to validate guardrails.
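
The API Gateway and Lambda step above might look like the sketch below. It assumes the OpenAI model you enabled is reachable through the Bedrock Runtime Converse API, which you should confirm for your model; the `BEDROCK_MODEL_ID` environment variable, its placeholder default, and the `retrieve` hook (a stand-in for Kendra or a knowledge base query) are hypothetical.

```python
import json
import os

# Hypothetical placeholder: look up the real model ID in the Bedrock console.
MODEL_ID = os.environ.get("BEDROCK_MODEL_ID", "example.openai-model-id")


def answer(question, passages, client):
    """Send the question plus retrieved passages through the Converse API."""
    context = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
    response = client.converse(
        modelId=MODEL_ID,
        system=[{"text": "Answer only from the numbered context and cite it like [1]."}],
        messages=[{
            "role": "user",
            "content": [{"text": f"Context:\n{context}\n\nQuestion: {question}"}],
        }],
        inferenceConfig={"maxTokens": 512},
    )
    blocks = response["output"]["message"]["content"]
    # Keep only text blocks; some models may also return other block types.
    return "".join(b["text"] for b in blocks if "text" in b)


def handler(event, context, client=None, retrieve=lambda q: []):
    """API Gateway proxy handler; `retrieve` returns a list of context passages."""
    question = json.loads(event.get("body") or "{}").get("question", "").strip()
    if not question:
        return {"statusCode": 400, "body": json.dumps({"error": "question is required"})}
    if client is None:
        import boto3  # imported lazily so the sketch can be tested without AWS access
        client = boto3.client("bedrock-runtime")
    text = answer(question, retrieve(question), client)
    return {"statusCode": 200, "body": json.dumps({"answer": text})}
```

Lambda only passes `event` and `context`, so the extra `client` and `retrieve` parameters fall back to their defaults in production and exist mainly to let you test the handler with a fake client.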

Compare options: Bedrock vs. Direct OpenAI API vs. Azure OpenAI

| Decision factor | OpenAI via Amazon Bedrock | Direct OpenAI API | Azure OpenAI |
|---|---|---|---|
| Best for | Teams standardized on AWS needing IAM, KMS, VPC, CloudTrail, and PrivateLink controls | Fast prototyping or startups without strict cloud controls | Microsoft-centric orgs using Entra ID, Sentinel, and native Azure networking |
| Identity & access | IAM integration, role-based controls, AWS SSO | OpenAI keys; enterprise SSO requires extra work | Azure AD (Entra) integration |
| Network isolation | PrivateLink/VPC endpoints available | Public internet unless proxied | Private endpoints/VNET integration |
| Compliance & audit | CloudTrail logs; AWS Config; GuardDuty (optional) | Limited native cloud audit unless integrated | Azure-native audit/logging |
| Agentic features | Server-side tool use via Bedrock Responses API; managed agent patterns | Tool use available; server-side orchestration is DIY | Azure orchestration + Functions; varies by region |
| Procurement | Under existing AWS agreements | Separate vendor contract | Under Microsoft agreements |
| Model availability | OpenAI GPT models (including GPT‑5.5) and Codex, plus Bedrock’s broader catalog | All current OpenAI models | OpenAI models per Azure availability |
| Migration path | Keep data/tools on AWS; reuse logs, keys, and policies | Minimal platform lock-in, but fewer enterprise controls | Best for Azure-native orgs |

Note: Check the specific region and model SKUs before go-live; offerings evolve quickly. OpenAI and AWS state this is a starting point for deeper integration and continued model expansion on Bedrock. (openai.com)

Conclusion and next steps

OpenAI’s arrival on AWS turns AI from a side project into a secure operations capability for small businesses and law firms. You can now pair OpenAI’s frontier models with AWS-native identity, encryption, private networking, and audit logging—accelerating time to value while reducing risk. Start small: stand up a firm-voice drafting assistant and an operations agent that closes a real gap (intake, billing QA, or support). Measure cycle time saved, error reduction, and client satisfaction. Then scale intentionally—add documents, tools, and oversight as your outcomes justify. With the right guardrails, AI can quietly remove friction from your workday and let your team focus on higher-value judgment and client service. (openai.com)

Ready to explore how you can streamline your processes? Reach out to A.I. Solutions today for expert guidance and tailored strategies.